Secure AI Factory with FlexPod AI

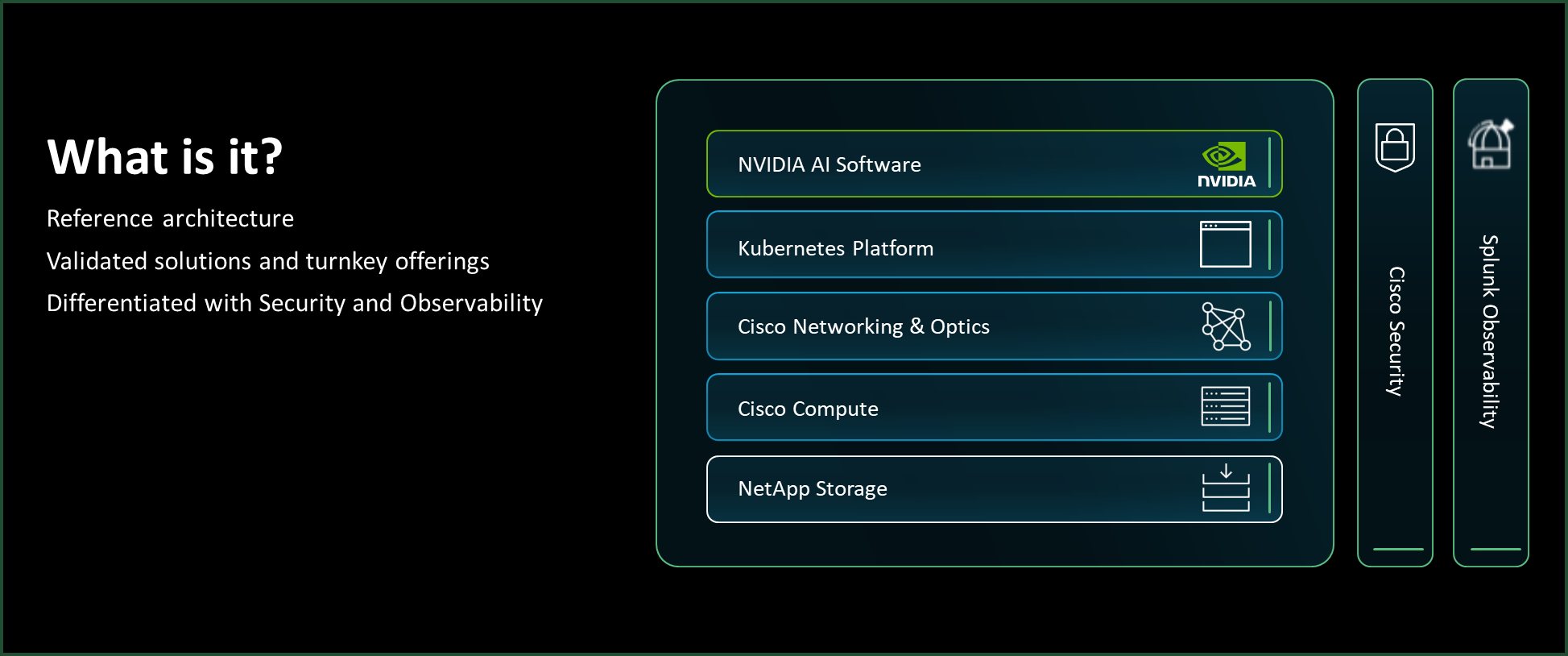

Secure AI Factory represents a new paradigm in enterprise AI infrastructure—one that tightly integrates compute, networking, and storage with security as a foundational element rather than as an afterthought. It provides a standardized, validated, and secure architecture to help enterprises accelerate AI deployment while safeguarding critical data, intellectual property, and operational integrity.


Features and Benefits

The capabilities that set Secure AI Factory with FlexPod AI apart.

The rise of generative AI

Exponential growth of generative AI

Leading AI model categories

Enterprise deployment challenges

- Compute infrastructure. AI workloads require massive parallel processing capabilities, often needing specialized GPUs such as NVIDIA L40S or H100.

- Security. AI systems introduce unique attack surfaces, ranging from training data pipelines to inference endpoints. Protecting them requires dedicated security strategies to prevent data breaches, model poisoning, and adversarial attacks.

- Scalability. As models grow, scaling compute, networking, and storage infrastructure without bottlenecks is complex.

- Vendor lock-in. Proprietary software licensing can lock enterprises into inflexible terms, affecting agility and cost efficiency.

The need for security in AI

AI at the heart of mission-critical operations

AI risks

- Data breaches. Training datasets often contain proprietary business information, regulated personal data, or intellectual property. A breach not only risks legal and compliance violations but can also lead to loss of competitive advantage.

- Model poisoning. Attackers can insert malicious data during training to subtly or drastically alter model behavior. This can result in biased decisions, misinformation generation, or deliberate system sabotage.

- Prompt injection and adversarial attacks. In generative AI systems, crafted prompts or inputs can manipulate the model into producing harmful or unintended outputs. Similarly, adversarial examples (specially modified inputs) can cause models to misclassify or misinterpret critical information.

- Model theft and reverse engineering. Sophisticated adversaries can attempt to replicate or to steal proprietary AI models through repeated queries, undermining the organization's investment in training and R&D.

- Pipeline and supply chain attacks. AI development pipelines rely on multiple open-source libraries, cloud APIs, and data sources. Compromising any element in this chain can introduce vulnerabilities into the deployed AI systems.
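
As an illustration of the pipeline and supply chain risk above, the following minimal Python sketch checks downloaded artifacts (model weights, tokenizer files, datasets) against pinned SHA-256 digests before they enter a training or inference pipeline. It is not part of Secure AI Factory or any vendor tooling. The `PINNED` manifest, the file name, and the helper names are hypothetical and exist only for this example.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a lowercase hex string."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(data: bytes, expected_digest: str) -> bool:
    """Compare the artifact's digest with the pinned one in constant time."""
    return hmac.compare_digest(sha256_hex(data), expected_digest.lower())


def check_all(artifacts: dict[str, bytes], pinned: dict[str, str]) -> list[str]:
    """Return names of artifacts that have no pin or fail verification."""
    failures = []
    for name, data in artifacts.items():
        expected = pinned.get(name)
        # An artifact without a pinned digest is treated as untrusted.
        if expected is None or not verify_artifact(data, expected):
            failures.append(name)
    return failures


# Hypothetical manifest: in practice the digests would be recorded when the
# artifact is first reviewed, not computed at check time as done here.
PINNED = {"tokenizer.json": sha256_hex(b'{"vocab": ["a", "b"]}')}

if __name__ == "__main__":
    downloaded = {"tokenizer.json": b'{"vocab": ["a", "b"]}'}
    print(check_all(downloaded, PINNED))
```

A check of this kind only detects tampering after the digest was pinned; it does not establish that the original artifact was trustworthy.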

Strategic partnership: Cisco, NVIDIA, and NetApp

Combined strengths of three technology leaders

- Cisco brings best-in-class networking, Zero Trust security frameworks, and workload protection through innovations such as Cisco Secure AI Defense, Hypershield, and Hybrid Mesh Firewall.

- NVIDIA provides the AI acceleration foundation, including GPUs such as L40S and H100, NVIDIA AI Enterprise software, and optimized frameworks for LLMs, generative AI, and deep learning.

- NetApp delivers AI-ready data management and storage, with high-performance NetApp® AFF A-Series systems, data orchestration through NetApp Trident software, and ransomware protection through BlueXP.

Validated, secure, and performance-optimized

FlexPod AI introduction

Core platform of Secure AI Factory

Turnkey solution with NVIDIA

FlexPod AI and Secure AI Factory with NVIDIA

FlexPod AI integrates

- Cisco UCS compute platforms for high-density, GPU-optimized processing.

- Cisco Nexus networking for ultralow-latency and high-bandwidth data flows.

- NetApp AFF all-flash storage for AI-ready, high-performance data pipelines.

- NVIDIA GPUs (such as L40S and H200) and AI Enterprise software for accelerated model training, fine-tuning, and inference.

Workload Security

Cisco Secure AI Defense

Cisco Hypershield

Isovalent Secure Firewall

Infrastructure Security

Cisco Hybrid Mesh Firewall

Data Security

Zero Trust architecture

Multilayered ransomware defense

NetApp AFF A-Series systems

- Ultralow latency

- Parallel I/O optimization

- End-to-end NVMe

Conclusion

Accelerated AI adoption

Thrive with expert-led storage guidance

Get tailored advice on how Secure AI Factory with FlexPod AI fits your environment — from sizing and deployment to long-term optimization.

Technical Specifications

Hardware and software components, as listed in the official documentation.

| Specification | Detail |
|---|---|
| Compute Platform | Cisco UCS compute platforms for high-density, GPU-optimized processing |
| Networking | Cisco Nexus networking for ultralow-latency and high-bandwidth data flows |
| Storage | NetApp AFF all-flash storage for AI-ready, high-performance data pipelines |
| Storage Foundation | NetApp AFF A-Series systems |
| Latency | Ultralow latency |
| I/O | Parallel I/O optimization |
| Protocol | End-to-end NVMe |
| GPUs | NVIDIA GPUs (such as L40S and H200) |
| AI Software | NVIDIA AI Enterprise software for accelerated model training, fine-tuning, and inference |
| AI Workload Security | Cisco Secure AI Defense |
| Run-time Protection | Cisco Hypershield |
| Container Security | Isovalent Secure Firewall (eBPF-powered firewalling) for Kubernetes and cloud-native AI workloads |
| Distributed Firewall | Cisco Hybrid Mesh Firewall for multisite and hybrid cloud environments |
| Architecture | Zero Trust architecture with FlexPod AI |
| Hardening | OS- and firmware-level security hardening including secure boot and firmware validation, patch management and vulnerability remediation, and configuration baselines |
| Ransomware Defense | Multilayered ransomware defense including anomaly detection, immutable backups, and rapid recovery |
| Ransomware Protection Software | NetApp BlueXP |
| Data Orchestration | NetApp Trident software |
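
The hardening entry mentions configuration baselines. As a rough illustration of the idea, and not a description of how any Cisco, NetApp, or NVIDIA tool implements it, the Python sketch below compares an observed host configuration against a desired baseline and reports the settings that have drifted. The setting names in `BASELINE` are hypothetical.

```python
def find_drift(
    baseline: dict[str, object], observed: dict[str, object]
) -> dict[str, tuple[object, object]]:
    """Map each drifted setting to a (expected, actual) pair.

    A setting missing from the observed configuration is reported with an
    actual value of None.
    """
    drift = {}
    for key, expected in baseline.items():
        actual = observed.get(key)
        if actual != expected:
            drift[key] = (expected, actual)
    return drift


# Hypothetical baseline for illustration only.
BASELINE = {
    "secure_boot": True,
    "firmware_signature_check": True,
    "ssh_root_login": False,
}

if __name__ == "__main__":
    observed = {
        "secure_boot": False,
        "firmware_signature_check": True,
        "ssh_root_login": False,
    }
    for setting, (expected, actual) in find_drift(BASELINE, observed).items():
        print(f"{setting}: expected {expected}, found {actual}")
```

The sketch checks only the settings named in the baseline; extra settings present on the host are ignored.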

Compare Secure AI Factory with FlexPod AI Series

Select the right scale for your workload demands.

| Model Name | Storage | Networking |
|---|---|---|
| FlexPod AI with NVIDIA L40S | NetApp AFF A-Series all-flash storage | Cisco Nexus ultralow-latency, high-bandwidth |
| FlexPod AI with NVIDIA H100 | NetApp AFF A-Series all-flash storage | Cisco Nexus ultralow-latency, high-bandwidth |
| FlexPod AI with NVIDIA H200 | NetApp AFF A-Series all-flash storage | Cisco Nexus ultralow-latency, high-bandwidth |

Learn more

Explore resources

Datasheets, whitepapers, case studies, and technical documentation.
